YOLO, short for You Only Look Once, changed how many engineers think about object detection. Earlier detection systems often used multi-stage pipelines that proposed regions first and classified them later. YOLO reframed detection as a direct prediction problem: take an image, run one forward pass, and predict bounding boxes plus class scores quickly enough for real-time use.

That design choice made YOLO especially attractive in robotics, video analytics, and autonomous systems, where latency matters as much as raw accuracy.

What problem YOLO solves

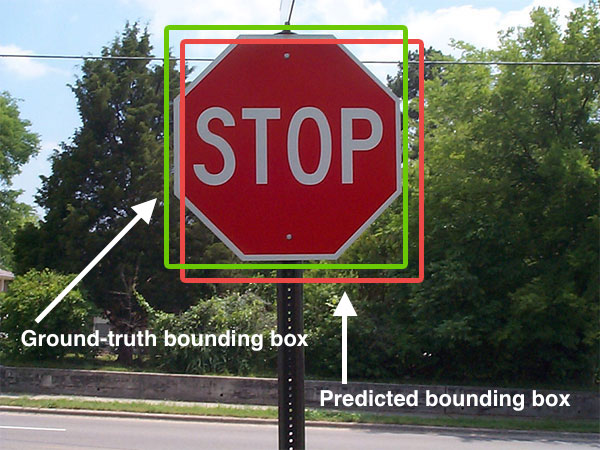

Image classification answers a simple question: what is in this image? Detection answers a harder one: what objects are present, where are they, and which class belongs to each box?

That difference matters in real systems. A vehicle or robot does not only need to know that a scene contains a pedestrian. It needs to know where the pedestrian is, how that person moves across frames, and whether the detection is stable enough to influence planning.

YOLO became popular because it made this detection step fast and practical at scale.

Why YOLO became influential

There are three main reasons YOLO spread so widely:

- Speed: real-time inference made it attractive for video and edge deployment.

- Simplicity: a single unified detector was easier to explain and deploy than older multi-stage systems.

- Strong engineering ecosystem: later implementations and tooling made training, exporting, and deployment more accessible.

Over time, the YOLO family evolved a lot. Anchor strategies changed, backbones improved, post-processing changed, and modern variants added tasks such as segmentation, pose, tracking, and oriented boxes. But the core identity remained: detection should be fast enough to use in real systems.

How YOLO works at a practical level

Conceptually, YOLO takes an image and predicts object locations and categories in one inference path. A modern pipeline usually includes:

- image resize and normalization

- feature extraction with a backbone network

- multi-scale detection heads

- confidence scoring and class prediction

- post-processing to remove duplicate boxes

The exact architecture depends on the version you use, but the operational idea is stable: produce detections quickly enough for downstream systems to react.

from ultralytics import YOLO

model = YOLO("yolo11n.pt")

results = model("street_scene.jpg")

for result in results:

for box in result.boxes:

print(box.cls, box.conf, box.xyxy)

The code above looks simple, but the real engineering work is often around dataset quality, deployment constraints, label consistency, camera setup, and tracking across frames.

Where YOLO fits in a larger system

YOLO is rarely the whole perception stack. In a deployed system it usually feeds into something larger:

- multi-object tracking

- sensor fusion

- risk estimation

- behavior planning

- alerting or actuation logic

For example, in an autonomous-driving context, YOLO-style detection may identify vehicles, pedestrians, bikes, and traffic cones. But planning still needs temporal tracking, motion prediction, and safety rules before it can turn those detections into driving decisions.

What YOLO is especially good at

YOLO tends to work well when you need:

- fast detection on live video

- compact deployment on edge hardware

- simple integration into monitoring or robotics pipelines

- good tradeoffs between speed and accuracy

This is why it appears so often in drones, traffic monitoring, warehouse robots, industrial safety, and smart-camera systems.

Where YOLO is not enough by itself

Even a strong detector has limits. YOLO alone does not solve:

- precise depth estimation

- fine-grained pixel segmentation

- long-term tracking identity under heavy occlusion

- full scene understanding for planning

- robust performance under severe domain shift without retraining

That is why practical systems usually pair detection with tracking, segmentation, map context, or other sensors.

Real engineering concerns

If you plan to deploy YOLO in a real product, the hard questions are usually not about the marketing benchmark. They are about:

- label quality and class definitions

- how often false positives appear in safety-critical scenes

- how small distant objects can be while still being detected

- latency on the exact target hardware

- nighttime, rain, motion blur, or camera vibration

- monitoring drift after deployment

In many teams, the biggest performance gains come from better data and better deployment choices, not from chasing a new model name every week.

Conclusion

YOLO became influential because it made object detection fast, practical, and easy to integrate into real systems. It remains a strong choice when engineers need real-time perception on images or video. But the best way to use YOLO is to treat it as one reliable module inside a broader perception stack, not as the full system by itself.

References

- Ultralytics YOLO documentation: https://docs.ultralytics.com/

- Ultralytics prediction docs: https://docs.ultralytics.com/modes/predict/

- Original YOLO paper listing in Ultralytics docs: https://docs.ultralytics.com/