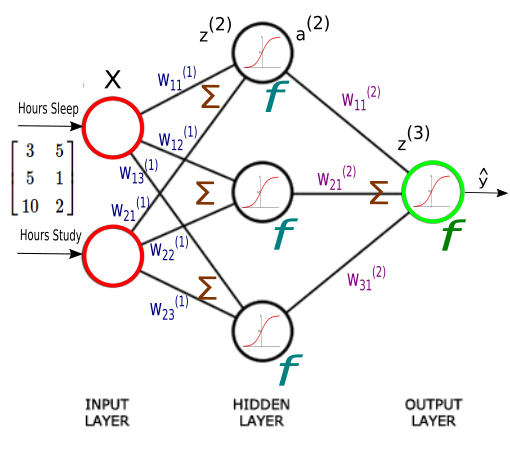

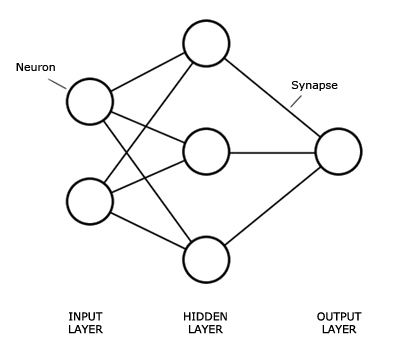

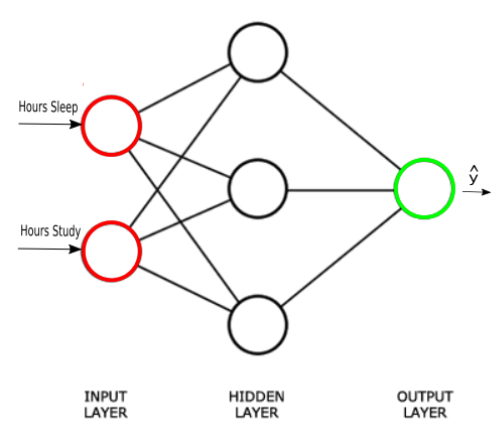

A neural network is structured as one input layer, one output layer, and one or more hidden layers in between.

Introduction

An Artificial Neural Network (ANN) is an interconnected group of nodes, similar to the network of neurons in our brain.

Here, we have three layers. Each circular node represents a neuron, and each line represents a connection from the output of one neuron to the input of another.

The first layer has input neurons which send data via synapses to the second layer of neurons, and then via more synapses to the third layer of output neurons.

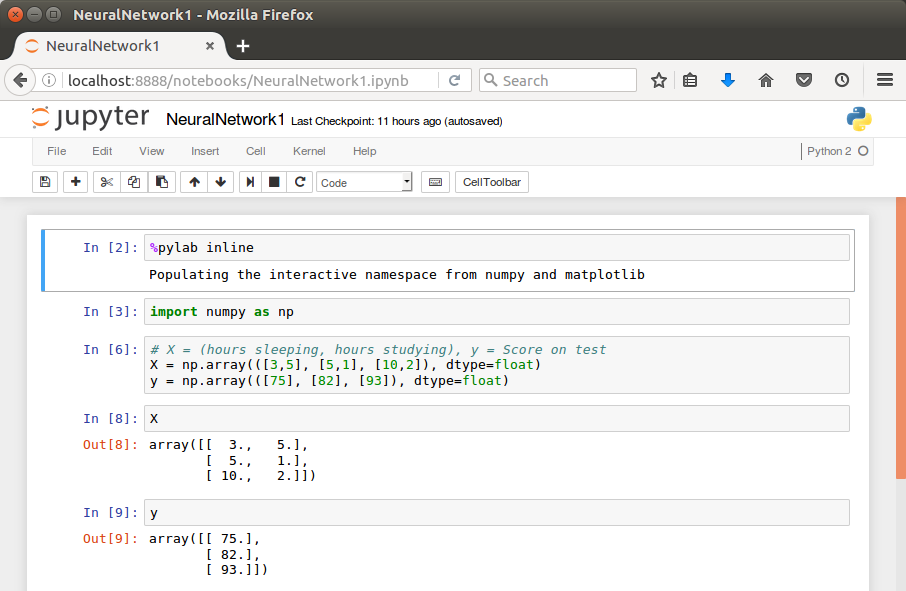

Jupyter Notebook

This tutorial uses IPython’s Jupyter notebook.

If you don’t have it, please visit iPython and Jupyter – Install Jupyter, iPython Notebook, drawing with Matplotlib, and publishing it to Github.

This tutorial consists of 7 parts, each with its own notebook. Please check out the Jupyter notebook files on GitHub.

Reference

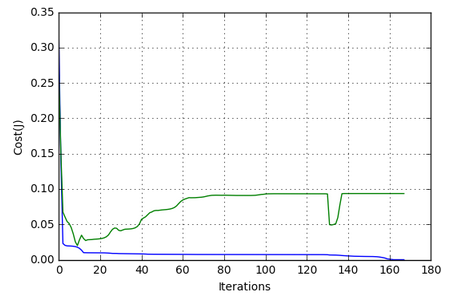

We’ll follow Neural Networks Demystified throughout this series of articles.

Problem

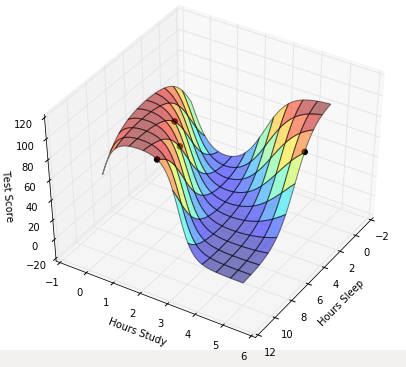

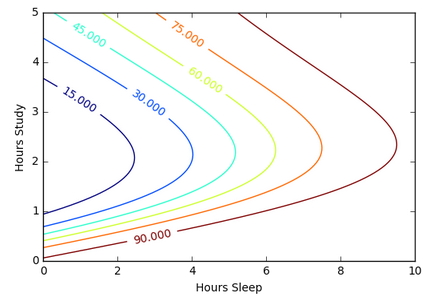

Suppose we want to predict our test score based on how many hours we sleep and how many hours we study the night before.

In other words, we want to predict the output value y, the test score, for a given input X, the hours of (sleep, study).

| X (hours of sleep, hours of study) | y (test score) |
|---|---|
| (3,5) | 75 |
| (5,1) | 82 |
| (10,2) | 93 |
| (8,3) | ? |

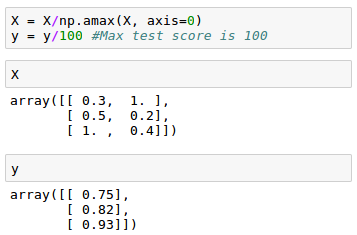

In our machine learning approach, we’ll use Python to store our data in 2-dimensional NumPy arrays.
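As a minimal sketch, the three known examples from the table could be stored like this. The variable names `X` and `y` follow the symbols used in the text, and the `(8,3)` row is left out because its score is the value we want to predict.

```python
import numpy as np

# Each row of X is one example: (hours of sleep, hours of study).
X = np.array([[3, 5],
              [5, 1],
              [10, 2]], dtype=float)

# Each row of y is the test score for the matching row of X.
y = np.array([[75],
              [82],
              [93]], dtype=float)

print(X.shape)  # (3, 2): three examples, two inputs each
print(y.shape)  # (3, 1): three examples, one output each
```

Keeping both arrays 2-dimensional, with one row per example, means the same code works unchanged if more examples are added later.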

We’ll use the data to train a model to predict how we will do on our next test.

This is a supervised regression problem.

It’s supervised because our examples have outputs (y).

It’s a regression problem because we’re predicting the test score, which is a continuous output.

If we were predicting the letter grade (A, B, etc.) instead, this would be a classification problem rather than a regression problem.

We may want to scale our data so that all values lie in the range [0,1].
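One simple way to do this, shown here only as a sketch, is to divide each input column by its largest value and to divide the scores by the maximum possible score. The text does not fix a scaling method, and the maximum score of 100 is an assumption made for this example.

```python
import numpy as np

# Same training data as in the table: (hours of sleep, hours of study) and test scores.
X = np.array([[3, 5], [5, 1], [10, 2]], dtype=float)
y = np.array([[75], [82], [93]], dtype=float)

# Divide each input column by that column's maximum (10 for sleep, 5 for study).
X_scaled = X / np.amax(X, axis=0)

# Assumption: test scores are out of 100.
MAX_SCORE = 100.0
y_scaled = y / MAX_SCORE

print(X_scaled)
print(y_scaled)
```

After scaling, every value lies between 0 and 1, and a prediction can be converted back to a test score by multiplying it by `MAX_SCORE`.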

Now we can start building our Neural Network.

We know our network must have 2 inputs (X) and 1 output (y).

We’ll call our output ŷ, because it’s an estimate of y.

Any layer between our input and output layer is called a hidden layer. Here, we’re going to use just one hidden layer with 3 neurons.
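The layer sizes described above can be written down as a small sketch. The names `input_layer_size`, `hidden_layer_size`, `output_layer_size`, `W1`, and `W2` are illustrative choices, and the random starting values are an assumption; the only facts taken from the text are the sizes 2, 3, and 1. Each array holds one number per connection between two neighbouring layers.

```python
import numpy as np

# Layer sizes from the text: 2 inputs, one hidden layer of 3 neurons, 1 output.
input_layer_size = 2
hidden_layer_size = 3
output_layer_size = 1

# Assumption: start each connection with a random value (fixed seed for repeatability).
rng = np.random.default_rng(0)
W1 = rng.standard_normal((input_layer_size, hidden_layer_size))   # input -> hidden
W2 = rng.standard_normal((hidden_layer_size, output_layer_size))  # hidden -> output

total_connections = W1.size + W2.size
print(W1.shape, W2.shape, total_connections)
```

With these sizes there are 2 × 3 connections into the hidden layer and 3 × 1 connections into the output layer.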

Neurons and synapses

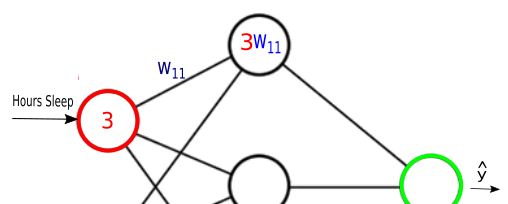

As explained in the earlier section, circles represent neurons and lines represent synapses.

Synapses have a really simple job.

They take a value from their input, multiply it by a specific weight, and output the result. In other words, the synapses store parameters called “weights” which are used to manipulate the data.
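In code, a single synapse amounts to one multiplication. The function name `synapse` and the weight value below are hypothetical and chosen only to illustrate the idea.

```python
def synapse(value, weight):
    """Multiply the incoming value by this synapse's stored weight."""
    return value * weight

hours_of_sleep = 3.0   # input value arriving at the synapse
weight = 0.5           # hypothetical weight, not a trained value

signal = synapse(hours_of_sleep, weight)
print(signal)  # 1.5
```

Training a network means adjusting these weights; the synapse itself never does anything more than this multiplication.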

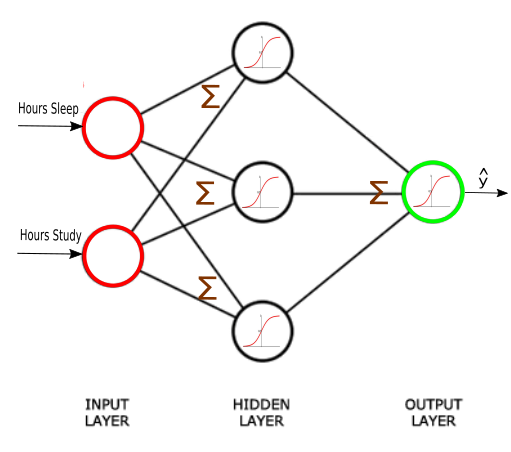

Neurons are a little more complicated.

A neuron’s job is to add together the outputs of all its incoming synapses and then apply an activation function.

Certain activation functions allow neural nets to model complex non-linear patterns.

For our neural network, we’re going to use sigmoid activation functions.
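The sketch below puts the two steps together: a neuron sums the outputs of its synapses, then passes the sum through the sigmoid function, 1 / (1 + e^(-z)). The function names and the input and weight values are hypothetical and serve only as an illustration.

```python
import numpy as np

def sigmoid(z):
    """Sigmoid activation: maps any real number into the interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def neuron(synapse_outputs):
    """Add together the synapse outputs, then apply the activation function."""
    return sigmoid(np.sum(synapse_outputs))

# Two incoming synapses, each already multiplied by a hypothetical weight.
synapse_outputs = np.array([0.3 * 0.5, 1.0 * -0.2])

activation = neuron(synapse_outputs)
print(activation)
```

Because `sigmoid` is built on `np.exp`, it also works element-wise on whole arrays, which is convenient when a layer of neurons is evaluated at once.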